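The word "Siamese" originally means "of Thailand" or "a Thai person". A common related term is "Siamese twins", meaning conjoined twins, and this is the sense behind the name Siamese network: two structurally identical branches that process their inputs separately, share the parameters of every layer, and are joined by a common part at the end. Siamese networks work well for similarity-comparison tasks. Because the two branches share parameters, there are also fewer parameters to train than with two independent networks. The slides listed in the references explain how Siamese networks are used in deep learning. Here, following the Siamese documentation in Caffe and using LeNet as the example, I briefly summarize how to write the prototxt file for a Siamese network in Caffe.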

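1. Data layer

The input to the Data layer can be in LMDB or LevelDB format. Data in this format can be generated by following $CAFFE_ROOT/examples/siamese/create_mnist_siamese.sh (that script builds the DB files from the original MNIST format; generating DB files from JPEG images requires some modifications). The Data layer has two outputs: one is pair_data, the paired images; the other is sim, which indicates whether the two images in a pair share the same label.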
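To make the two outputs concrete, here is a minimal sketch of how one sample could be assembled. It assumes that the two images of a pair are stacked as two channels of a single sample and that sim is 1 when the labels match and 0 otherwise; the function name `make_pair` and the tiny 2x2 images are hypothetical and only serve the illustration.

```python
def make_pair(image_a, label_a, image_b, label_b):
    """Pack two single-channel images into one 2-channel sample.

    Returns (pair_data, sim): pair_data is [image_a, image_b] (channel 0 and
    channel 1), and sim is 1 when both images carry the same label, else 0.
    """
    same_shape = len(image_a) == len(image_b) and all(
        len(row_a) == len(row_b) for row_a, row_b in zip(image_a, image_b)
    )
    if not same_shape:
        raise ValueError("both images must have the same height and width")
    pair_data = [image_a, image_b]
    sim = 1 if label_a == label_b else 0
    return pair_data, sim


# Two tiny 2x2 "images" standing in for MNIST digits (hypothetical data).
seven = [[0, 255], [0, 255]]
one = [[255, 0], [255, 0]]

pair, sim = make_pair(seven, 7, one, 1)
print(len(pair), sim)
```

Stacking the pair along the channel dimension is what lets a single Slice layer separate the two images again later.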

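2. Slice layer

The Slice layer is a utility layer in Caffe that splits an input blob (bottom) into several output blobs (top). The official documentation gives the following example: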

    layer {
      name: "slicer_label"
      type: "Slice"
      bottom: "label"
      top: "label1"
      top: "label2"
      top: "label3"
      slice_param {
        axis: 1
        slice_point: 1
        slice_point: 2
      }
    }

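This layer, named slicer_label, splits the label blob into three parts. axis is the dimension to split along; 1 here means the second dimension. slice_point lists the indices at which to cut: with cut points 1 and 2, label1 receives index 0, label2 receives index 1, and label3 receives everything from index 2 onward.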
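The slicing rule can be sketched in plain Python. This is only an emulation on nested lists, not Caffe code; the helper name `slice_axis1` and the placeholder strings are made up for the illustration.

```python
def slice_axis1(batch, slice_points):
    """Split each item of `batch` along its first inner dimension.

    `batch` is a list of items (axis 0); each item is a list indexed along
    axis 1. `slice_points` are the indices at which to cut, in ascending order.
    """
    width = len(batch[0])
    bounds = [0] + list(slice_points) + [width]
    return [
        [item[start:stop] for item in batch]
        for start, stop in zip(bounds[:-1], bounds[1:])
    ]


# The three-way split from the example: one label triple per sample.
labels = [[1, 2, 3], [4, 5, 6]]
label1, label2, label3 = slice_axis1(labels, [1, 2])
print(label1, label2, label3)

# The Siamese case: each sample has 2 channels, cut once at index 1.
pair_data = [["img_a0", "img_b0"], ["img_a1", "img_b1"]]
data, data_p = slice_axis1(pair_data, [1])
print(data, data_p)
```

In the Siamese prototxt the same idea is applied with a single slice_point of 1, so pair_data is separated into data and data_p, one image per branch.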

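3. Shared layers

The convolution, pooling, and ReLU layers that follow are identical in the two branches, so you can write one branch and then paste a copy after it as the second branch. The name, bottom, and top values in the copy must be changed so that they differ (in the example, each name in the second branch is the corresponding first-branch name with _p appended, for "pair").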

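4. How to share parameters

How do the two branches share parameters? In Caffe, the corresponding layers of the two branches each declare a parameter with the same name, so both layers read and update the same parameter. For example: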

    ...
    layer {
      name: "ip2"
      type: "InnerProduct"
      bottom: "ip1"
      top: "ip2"
      param {
        name: "ip2_w"
        lr_mult: 1
      }
    }
    ...
    layer {
      name: "ip2_p"
      type: "InnerProduct"
      bottom: "ip1_p"
      top: "ip2_p"
      param {
        name: "ip2_w"
        lr_mult: 1
      }
    }
    ...

In the example above, the corresponding layers of both branches declare a parameter named ip2_w, so they share its value during training.
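The effect of sharing by name can be illustrated with a toy model. This is a sketch of the idea, not Caffe's actual implementation: the `Param` and `Net` classes, the scalar weight, and the toy loss are all assumptions made for the illustration. The point is that two layers asking for the same parameter name receive the same object, so the gradients of both branches accumulate into one weight.

```python
class Param:
    """A scalar weight together with its accumulated gradient."""

    def __init__(self, value):
        self.value = value
        self.grad = 0.0


class Net:
    """Keeps a registry of parameters keyed by name."""

    def __init__(self):
        self.params = {}  # parameter name -> Param

    def get_param(self, name, init=0.5):
        # Layers that ask for the same name receive the same object.
        if name not in self.params:
            self.params[name] = Param(init)
        return self.params[name]


net = Net()
w_branch1 = net.get_param("ip2_w")  # as used by layer "ip2"
w_branch2 = net.get_param("ip2_w")  # as used by layer "ip2_p"

# Toy backward pass: with y = w * x and loss = y, d(loss)/dw = x per branch.
x1, x2 = 2.0, 3.0
w_branch1.grad += x1
w_branch2.grad += x2

# One SGD step on the single shared weight.
learning_rate = 0.1
for param in net.params.values():
    param.value -= learning_rate * param.grad

print(w_branch1 is w_branch2, w_branch1.grad, w_branch1.value)
```

Because there is only one entry in the registry, the update is applied once, using the sum of the contributions from both branches.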

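5. Feature layer

After the two fully connected layers, the softmax layer that the original network uses for classification is replaced with a fully connected layer that outputs a 2-dimensional vector: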

    layer {
      name: "feat"
      type: "InnerProduct"
      bottom: "ip2"
      top: "feat"
      param {
        name: "feat_w"
        lr_mult: 1
      }
      param {
        name: "feat_b"
        lr_mult: 2
      }
      inner_product_param {
        num_output: 2
        weight_filler {
          type: "xavier"
        }
        bias_filler {
          type: "constant"
        }
      }
    }

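6. ContrastiveLoss layer

Once the two features have been produced, the loss can be computed from them and the sim label defined earlier. The Siamese network uses a loss called "ContrastiveLoss". For a pair labeled as similar, the loss is smaller the closer the two features are. For a pair labeled as dissimilar, the loss is larger the closer the two features are, and it drops to zero once they are at least margin apart.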

    layer {
      name: "loss"
      type: "ContrastiveLoss"
      bottom: "feat"
      bottom: "feat_p"
      bottom: "sim"
      top: "loss"
      contrastive_loss_param {
        margin: 1
      }
    }
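As a worked example, the loss can be written out in a few lines of Python. This sketch assumes the commonly used form of the contrastive loss, with d the Euclidean distance between the two features, y the sim label, and N the batch size: E = 1/(2N) * sum(y * d^2 + (1 - y) * max(margin - d, 0)^2). The function name and the sample values are made up for the illustration.

```python
import math


def contrastive_loss(feats_a, feats_b, sims, margin=1.0):
    """Average contrastive loss over a batch of feature pairs."""
    total = 0.0
    for a, b, y in zip(feats_a, feats_b, sims):
        # Euclidean distance between the two feature vectors.
        d = math.sqrt(sum((ai - bi) ** 2 for ai, bi in zip(a, b)))
        if y == 1:
            # Similar pair: penalize any distance.
            total += d ** 2
        else:
            # Dissimilar pair: penalize only when closer than the margin.
            total += max(margin - d, 0.0) ** 2
    return total / (2 * len(sims))


similar_far = contrastive_loss([[0.0, 0.0]], [[3.0, 4.0]], [1])
dissimilar_far = contrastive_loss([[0.0, 0.0]], [[3.0, 4.0]], [0])
dissimilar_close = contrastive_loss([[0.0, 0.0]], [[0.5, 0.0]], [0])
print(similar_far, dissimilar_far, dissimilar_close)
```

With margin 1, a dissimilar pair whose features are 5 apart contributes nothing, while the same distance for a similar pair is penalized heavily.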

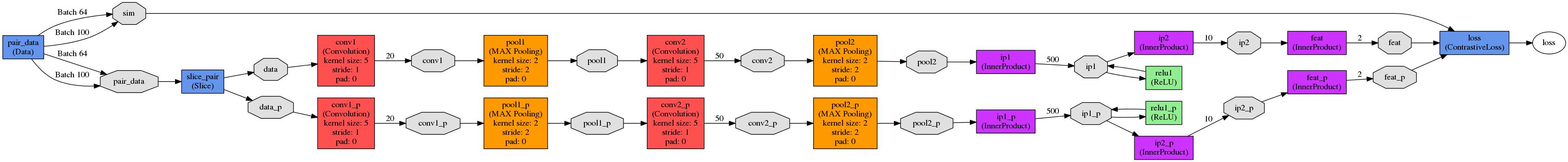

7. Visualizing the network structure

That is the entire network structure. The script $CAFFE_ROOT/python/draw_net.py can draw it; the figure above shows the result.

The complete network definition is as follows:

    name: "mnist_siamese_train_test"
    layer {
      name: "pair_data"
      type: "Data"
      top: "pair_data"
      top: "sim"
      include {
        phase: TRAIN
      }
      transform_param {
        scale: 0.00390625
      }
      data_param {
        source: "examples/siamese/mnist_siamese_train_leveldb"
        batch_size: 64
      }
    }
    layer {
      name: "pair_data"
      type: "Data"
      top: "pair_data"
      top: "sim"
      include {
        phase: TEST
      }
      transform_param {
        scale: 0.00390625
      }
      data_param {
        source: "examples/siamese/mnist_siamese_test_leveldb"
        batch_size: 100
      }
    }
    layer {
      name: "slice_pair"
      type: "Slice"
      bottom: "pair_data"
      top: "data"
      top: "data_p"
      slice_param {
        slice_dim: 1
        slice_point: 1
      }
    }
    layer {
      name: "conv1"
      type: "Convolution"
      bottom: "data"
      top: "conv1"
      param {
        name: "conv1_w"
        lr_mult: 1
      }
      param {
        name: "conv1_b"
        lr_mult: 2
      }
      convolution_param {
        num_output: 20
        kernel_size: 5
        stride: 1
        weight_filler {
          type: "xavier"
        }
        bias_filler {
          type: "constant"
        }
      }
    }
    layer {
      name: "pool1"
      type: "Pooling"
      bottom: "conv1"
      top: "pool1"
      pooling_param {
        pool: MAX
        kernel_size: 2
        stride: 2
      }
    }
    layer {
      name: "conv2"
      type: "Convolution"
      bottom: "pool1"
      top: "conv2"
      param {
        name: "conv2_w"
        lr_mult: 1
      }
      param {
        name: "conv2_b"
        lr_mult: 2
      }
      convolution_param {
        num_output: 50
        kernel_size: 5
        stride: 1
        weight_filler {
          type: "xavier"
        }
        bias_filler {
          type: "constant"
        }
      }
    }
    layer {
      name: "pool2"
      type: "Pooling"
      bottom: "conv2"
      top: "pool2"
      pooling_param {
        pool: MAX
        kernel_size: 2
        stride: 2
      }
    }
    layer {
      name: "ip1"
      type: "InnerProduct"
      bottom: "pool2"
      top: "ip1"
      param {
        name: "ip1_w"
        lr_mult: 1
      }
      param {
        name: "ip1_b"
        lr_mult: 2
      }
      inner_product_param {
        num_output: 500
        weight_filler {
          type: "xavier"
        }
        bias_filler {
          type: "constant"
        }
      }
    }
    layer {
      name: "relu1"
      type: "ReLU"
      bottom: "ip1"
      top: "ip1"
    }
    layer {
      name: "ip2"
      type: "InnerProduct"
      bottom: "ip1"
      top: "ip2"
      param {
        name: "ip2_w"
        lr_mult: 1
      }
      param {
        name: "ip2_b"
        lr_mult: 2
      }
      inner_product_param {
        num_output: 10
        weight_filler {
          type: "xavier"
        }
        bias_filler {
          type: "constant"
        }
      }
    }
    layer {
      name: "feat"
      type: "InnerProduct"
      bottom: "ip2"
      top: "feat"
      param {
        name: "feat_w"
        lr_mult: 1
      }
      param {
        name: "feat_b"
        lr_mult: 2
      }
      inner_product_param {
        num_output: 2
        weight_filler {
          type: "xavier"
        }
        bias_filler {
          type: "constant"
        }
      }
    }
    layer {
      name: "conv1_p"
      type: "Convolution"
      bottom: "data_p"
      top: "conv1_p"
      param {
        name: "conv1_w"
        lr_mult: 1
      }
      param {
        name: "conv1_b"
        lr_mult: 2
      }
      convolution_param {
        num_output: 20
        kernel_size: 5
        stride: 1
        weight_filler {
          type: "xavier"
        }
        bias_filler {
          type: "constant"
        }
      }
    }
    layer {
      name: "pool1_p"
      type: "Pooling"
      bottom: "conv1_p"
      top: "pool1_p"
      pooling_param {
        pool: MAX
        kernel_size: 2
        stride: 2
      }
    }
    layer {
      name: "conv2_p"
      type: "Convolution"
      bottom: "pool1_p"
      top: "conv2_p"
      param {
        name: "conv2_w"
        lr_mult: 1
      }
      param {
        name: "conv2_b"
        lr_mult: 2
      }
      convolution_param {
        num_output: 50
        kernel_size: 5
        stride: 1
        weight_filler {
          type: "xavier"
        }
        bias_filler {
          type: "constant"
        }
      }
    }
    layer {
      name: "pool2_p"
      type: "Pooling"
      bottom: "conv2_p"
      top: "pool2_p"
      pooling_param {
        pool: MAX
        kernel_size: 2
        stride: 2
      }
    }
    layer {
      name: "ip1_p"
      type: "InnerProduct"
      bottom: "pool2_p"
      top: "ip1_p"
      param {
        name: "ip1_w"
        lr_mult: 1
      }
      param {
        name: "ip1_b"
        lr_mult: 2
      }
      inner_product_param {
        num_output: 500
        weight_filler {
          type: "xavier"
        }
        bias_filler {
          type: "constant"
        }
      }
    }
    layer {
      name: "relu1_p"
      type: "ReLU"
      bottom: "ip1_p"
      top: "ip1_p"
    }
    layer {
      name: "ip2_p"
      type: "InnerProduct"
      bottom: "ip1_p"
      top: "ip2_p"
      param {
        name: "ip2_w"
        lr_mult: 1
      }
      param {
        name: "ip2_b"
        lr_mult: 2
      }
      inner_product_param {
        num_output: 10
        weight_filler {
          type: "xavier"
        }
        bias_filler {
          type: "constant"
        }
      }
    }
    layer {
      name: "feat_p"
      type: "InnerProduct"
      bottom: "ip2_p"
      top: "feat_p"
      param {
        name: "feat_w"
        lr_mult: 1
      }
      param {
        name: "feat_b"
        lr_mult: 2
      }
      inner_product_param {
        num_output: 2
        weight_filler {
          type: "xavier"
        }
        bias_filler {
          type: "constant"
        }
      }
    }
    layer {
      name: "loss"
      type: "ContrastiveLoss"
      bottom: "feat"
      bottom: "feat_p"
      bottom: "sim"
      top: "loss"
      contrastive_loss_param {
        margin: 1
      }
    }
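To see how much parameter sharing saves in this network, the learnable parameters of one branch can be counted from the layer sizes in the definition. The sketch below assumes 28x28 single-channel inputs per branch (the usual MNIST size, which the prototxt itself does not state) and no padding; the helper names are made up for the illustration.

```python
def output_size(size, kernel, stride=1):
    """Spatial output size of a convolution or pooling window without padding."""
    return (size - kernel) // stride + 1


def count_branch_params(input_size=28, input_channels=1):
    """Count weights and biases of one branch (conv1 through feat)."""
    total = 0

    # conv1: 20 filters of 5x5 over the input channels, plus 20 biases.
    total += 20 * input_channels * 5 * 5 + 20
    size = output_size(input_size, 5)       # after conv1
    size = output_size(size, 2, stride=2)   # after pool1

    # conv2: 50 filters of 5x5 over 20 channels, plus 50 biases.
    total += 50 * 20 * 5 * 5 + 50
    size = output_size(size, 5)             # after conv2
    size = output_size(size, 2, stride=2)   # after pool2

    # ip1: fully connected from the flattened pool2 output to 500 units.
    total += 500 * (50 * size * size) + 500
    # ip2: 500 -> 10, feat: 10 -> 2.
    total += 10 * 500 + 10
    total += 2 * 10 + 2
    return total


shared = count_branch_params()
unshared = 2 * shared
print(shared, unshared)
```

Under these assumptions one branch holds 431,102 parameters, and since every parameter is shared by name, that is also the total for the whole Siamese network; two independent branches would need twice as many.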

8. Training

Training works the same way as for any other network, so I will not go into detail here.

9. References

https://www.quora.com/What-are-Siamese-neural-networks-what-applications-are-they-good-for-and-why

http://vision.ia.ac.cn/zh/senimar/reports/Siamese-Network-Architecture-and-Applications-in-Computer-Vision.pdf